The Next Generation May Mark Another Bifurcation in Human Evolution: On AI's Threat to Education and Humanity

AI benefits our generation—that fact may have bred in us an unfounded confidence that it will benefit the next generation as well.

AI Benefits Us Because We Grew Up Without It

Today, everyone is enthusiastic about AI, whether as an entertainment tool or a productivity tool. AI certainly presents challenges, but its usefulness is undeniable.

Why does our generation believe it has benefited? Because we grew up without AI. Education is the most important formative experience. The core of modern education is essentially organizing language. When asked “How should postpartum care for sows be handled?” answering “Metals can also experience fatigue” would be wrong. Answering “Proper ventilation and sterilization should be maintained” would be closer to correct. The first answer is wrong only because, in your study materials, the phrase “Metals can also experience fatigue” never appeared anywhere near the topic of postpartum sow care. Our brains possess a remarkable ability to organize “correct sentences” from an overwhelming mass of linguistic materials.

But this has nothing to do with genuine understanding of the world. When we study history, geography, politics, physics, biology, chemistry, and so on, we are first and foremost learning the linguistic expressions that previous generations arrived at through their exploration of the world. It is only at the graduate level that we get the chance to explore the world directly ourselves: performing astronomical calculations firsthand, observing protein structures personally, and so forth. Except in the domain of everyday life, most people’s intellectual activities are, in fact, not substantially different from what AI does.[1]

What people previously took for granted as “uniquely human intellectual activity”—work that amounts to producing correct sentences—is now like computing “3689011 to the power of 10 to the 22nd”: machines can do it faster and more accurately than most people.

Whether AI will ultimately achieve consciousness, and how its persistent memory capabilities will be implemented, remain genuinely open questions. So it is premature to claim that AI has surpassed human beings in intellectual activity. Yet this very uncertainty seems to have given us a kind of confidence: that AI will continue to benefit us, and not only our generation but the next one as well.

But this confidence comes with a caveat. We imagine that the next generation’s education must focus more on creative, experiential intellectual and artistic training, so that before AI achieves consciousness and embodiment, humanity can keep AI firmly in hand as a convenient tool. This is what I said in another article: the way to avoid or slow down being replaced by AI is to become more human-like.

However, two fundamental challenges are fast approaching:

First, AI is becoming better than most people at an increasing number of intellectual activities.

Second, the very existence of AI will soon make traditional education broadly unsustainable.

These two problems are tightly intertwined. If the first point becomes reality, most of what universities produce, namely university graduates, will no longer be needed by society. When a product doesn’t sell, the factory must shut down. Once the university, the top link in the modern educational chain, breaks, secondary schools will lose their reason to exist. Perhaps only elementary schools will retain some purpose.

Of course, things may not be so pessimistic. Perhaps only some universities will close; perhaps only traditional methods of education need to change. But the biggest problem may not be how to change—it may be whether there is any motivation to change at all.

Learning Is a Process of Continuous Trial and Error, and of Continuously Building Neural Connections

If you are a highly motivated learner, or you hold advanced degrees, or you have continuously pushed the boundaries in your field, look back at your educational journey. What was the most important thing in all of that?

If I were to answer this question, I would say: trial and error.

For those studying the natural sciences, even the tiniest theoretical conjecture about the composition and operation of the world must be verified again and again through experiment. For those studying the humanities and social sciences (which I will, somewhat imprecisely, abbreviate as “the humanities” below), the situation is even more difficult. They have no formulas, no fixed objects of study. A legal philosopher, for instance, must simultaneously handle concepts, propositions, and theories that overlap and are never isolated from one another, spanning different levels, eras, languages, schools of thought, texts, and regions.

I said long ago that “the humanities have a low floor but a high ceiling.” Those who truly master the humanities may be more formidable than those who master the natural sciences. The humanities are quintessentially language-oriented disciplines that often do not directly confront the world. Language is extraordinarily complex. A clear piece of thinking in the humanities must at least answer these questions:

- What is the entity or object you are discussing (what exists)?

- What properties do these entities have, or what relationships do they bear to other entities (what properties or relations obtain)?

- What phenomena or consequences do these properties or relations produce (what phenomena or results follow)?

In the sciences, these questions are often the starting point, from which mathematization and modeling proceed. In the humanities, they are precisely the endpoint of research. Much of philosophical activity does just one thing: it continuously reveals the “truths” of our linguistic practices, such as “Nations are imagined communities,” “Our language creates metaphysical illusions for us,” or “The concept of gender is entirely socially constructed.”

I am not saying, of course, that all problems in the humanities are merely linguistic problems, divorced from the world. I am saying that the humanities deal more precisely with the interface or zone where language and the world interact. In a sense, linguistic activity is the most important social activity. In social practice, we more often make the “World conform to the Word”; in natural science, by contrast, we make the “Word conform to the World.”

I believe everyone who has studied the humanities has had this experience. Language is like a tangled skein, while the world appears and disappears. Language is not only complex but full of rubbish. Learning and research are like searching for recyclables in a landfill with a lantern. And at any given moment, our reading is finite, yet we must form definite views. We need to advance in our studies, graduate, publish papers, and get promoted, and for that we must assert immediately and write immediately. What makes things even more difficult is that reading more is not always better. As our research progresses, we must find a definite direction and discover a genuine problem. So we must not only distinguish quality content from the vast ocean of literature, but further refine our selection from among the good sources.

And we must keep wrestling with the practice of writing itself.

Here is how I would put it: throughout this entire process, we are continuously making trial-and-error attempts, and it is precisely through errors that we make progress. Nothing gives us genuine understanding more effectively than our own past mistakes.

Think about it: one day, based on the literature available to us, we arrive at a view that feels absolutely certain. Then, as time passes, as our access to literature changes, and as our thinking advances, we discover that our earlier view was wrong. The result is that we step out of that “error” and move in what now appears to be the “correct” direction. I must say that for a researcher in the humanities and social sciences, few scenarios are more beautiful than this.

Looking back on my own decade of intellectual searching, I recall a period when I kept asking myself: Is my reading method correct? Where should I begin? I thought about these questions for a full year or two, and even wrote tens of thousands of words of reflection and summary at different stages.[2] Finally, I felt I had found a study method that gave me confidence. But that was only the beginning, a good preparation. A great deal of substantive exploration still lay ahead, repeating the endless cycle of trial and error, involving not only reading but also writing. Most of the academic articles I have published on this blog now seem outdated to me and need new articles to replace them. When I first graduated, I thought my doctoral dissertation was well worth publishing. But just two years later, as my thinking advanced, I came to feel that it was, at best, an incomplete treatment of the problem at one particular level.

Such is the charm of error. We truly do step from one error into another. I have long wanted to write an article celebrating error. Whatever our stance or role, we should be tolerant—even welcoming—of our own and others’ mistakes, so long as they do not harm others (and even when they do cause harm, are unwelcome, and deserve condemnation or punishment, they remain an enormous personal treasure for the person who made them).

Error Is Part of What Makes Understanding Correct

What is the essential nature of error in intellectual exploration? It is not only what is commonly understood as the formation of thought—it is also a process of physiological shaping. Intellectual activity is itself a physiological activity, because as we think, the brain is simultaneously attempting to establish new neural connections. When we feel that our thinking has advanced to a certain stage, our neural connections have, in fact, found a direction (though we cannot yet observe them directly). Our thinking manifests as a kind of order—the order of language; and our neural connections also form an order, a genuine physiological order.

The key insight is this: when we move from one “error” to something “less incorrect,” we do not completely lose the previous “erroneous” neural connections. We simply mark them and branch off from them. Neural connections are a physiological fact: in themselves, they draw no distinction between error and correctness. We can imagine that the old order formed by the original neural connections and the new order formed by the branching connections will exist side by side as a contrast. That erroneous neural pathway will always serve as a reference or background for the correct branch.

Our knowledge does not take the form of a single line, but of a three-dimensional network extending in all directions. The neural order formed by countless major and minor erroneous pathways, and the various connections established with it in various ways, constitute an indispensable background. We can imagine that perhaps every “correct” pathway has not just one but multiple parallel “erroneous” pathways.

This is why “failure is the mother of success” is not merely a matter of thought—it is a neurological fact.

Error constitutes the most important background of correctness, and this background does not disappear. If it did disappear, correct views would become thin, reduced to a mere arrangement of words. One can never hand someone a correct sentence and thereby make them understand a problem.

If a person wants to become an expert in some field, or at least possess a stable intellectual order and a basically correct orientation, they must have gone through a series of trial-and-error experiences. This process takes years or even decades. It is one of struggle, exploration, bewilderment, and even pain. Learning cannot be purely joyful, because the process of establishing neural connections is itself enormously energy-consuming—it is a process accompanied by intense metabolic activity and inflammatory responses.

A Generation That Loses the Motivation to Learn May Also Lose Its Humanity

The problem is now clear. When AI can perform better and faster than most people at intellectual activities once considered “uniquely human,” what motivation will most people have not to use it? If AI can already do the work, why should humans expend the effort to go through such a painful, prolonged, friction-filled process? This is a test of human nature: if the answer is right there during an exam, how many people would resist peeking? If an exam tests questions you’ve seen before, how many could resist memorizing the questions and drilling on practice sets, rather than genuinely studying the textbook?

One of the terrifying things about AI is that it will deprive our descendants of the motivation to learn. Learning is a process of confusion, failure, misunderstanding, correction, waiting, settling, and internalization. A person’s humanity contains all of these feelings, and these feelings must, of course, be continuously organized, expressed, and conveyed through language.

Once learning ceases and the work of thinking—or rather, the work of producing correct sentences—is handed over to AI, what changes is not merely what we ordinarily understand as intellectual views. It is a matter of humanity, including our physiological makeup.

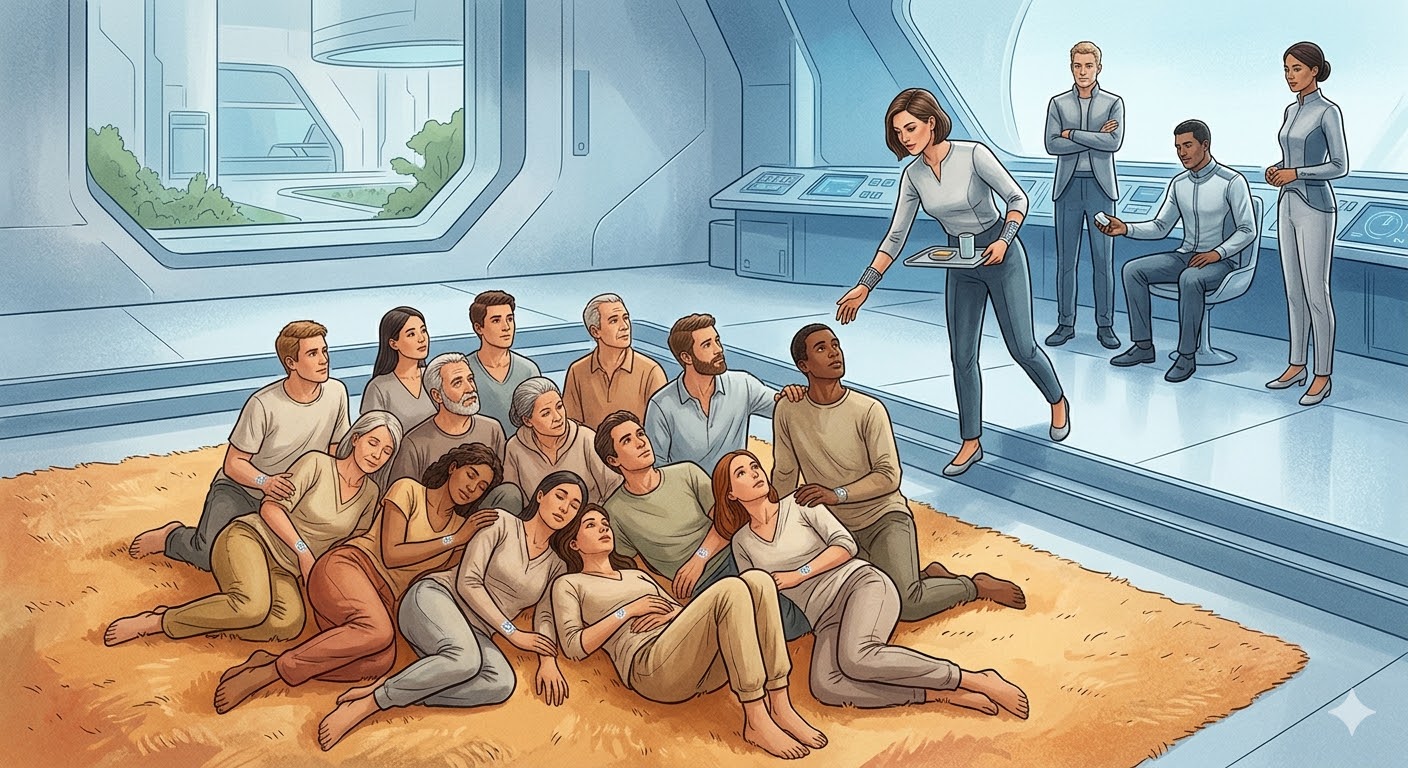

Consider two people. The first has gone through the traditional learning process; her brain has a specific internal structure in which the traces of trial and error are all preserved to some degree. When presented with a question or problem, she can think, answer, and explore along that direction. The second has not gone through the traditional learning process, but with AI’s help can arrive at conclusions just as good as, or even better than, the first person’s, and can continue to explore more deeply in that direction with AI’s assistance.[3]

At least in terms of results, there is no difference. If a person can, with AI’s help and without undergoing the traditional learning process, continuously organize correct sentences and construct correct expressions about the world through those sentences, then what is the problem? The only cost is that the person does not genuinely understand what they are producing: in essence, they are cheating.

Of course, long-term reliance on AI may train, strengthen, and stabilize quite different neural pathways underlying attention, memory, problem decomposition, language generation, and error correction. Yet whether humanity can truly shift from thinking for itself to supervising thinking remains an open question. There is no precedent for this in all of human history. If you are not good at thinking, how can you be good at supervising thinking? I have already pointed out that being good at thinking is precisely the result of continuous trial and error.

The Essence of Education Lies Not Only in Transmitting Knowledge, But in Enriching Humanity

This brings us back to the question of the essence of education. Modern education aims to transmit knowledge and enable us to cope with nature and society. Knowledge is carried by language. For those who receive a traditional education, learning to “produce correct sentences” remains the most important skill.

But at the same time, the essence of education is not merely transmitting knowledge—it is about enriching humanity. Physics tells us that everything in the world is composed of smaller fundamental units. It does not merely tell us a so-called fact; it helps us understand the universe we inhabit. Understanding this universe is understanding ourselves; understanding ourselves is understanding life—and this shapes and transforms life experience. A person who knows these things about modern physics and a person who does not will have fundamentally different life experiences.

The same is true of history, politics, and literature. It is true that their primary goal is to teach us to organize correct sentences, to transmit and advance knowledge, and to enable us to practice better. But on another level, they also change our humanity—or rather, they extend, expand, and enrich our life experience.

Yet with AI’s rapid progress, the traditional learning process is losing not only its market but its motivation. This is a challenge for education, and it is a challenge for humanity.

Will the Next Generation Be the Beginning of a New Evolutionary Branch?

In a previous article, I said that if AI replaces most of the workforce, most people will lose their jobs. But because material wealth may grow enormously due to the efficiency AI brings, these people will not starve—they may, in fact, live more materially comfortable lives than ever before.

But today’s discussion reveals another dimension: if that day truly arrives, the phrase “human pets” will no longer be merely a metaphor, because such people will have no reason to keep learning. And the difference between learning and not learning lies not only in the “mind” but in the “brain.” Learning subjects the “mind” to a prolonged process of friction and continuous trial and error, which is at the same time a process in which new neural connections continuously form. A person who has gone through it truly knows and truly understands; their life experience is genuinely full.

I even wonder: could this be the beginning of a bifurcation in human evolution, like the divergent branches that appeared in the course of ancient human evolution? One group continues to maintain long, arduous processes of internalized learning, and therefore continues to engage in creative intellectual activity (or at least supervisory intellectual activity), while the majority no longer needs to learn and is sustained by the former (in the name of democracy!). The serious consequence of not needing to work is not needing to learn, something few people mention. And the even more serious consequence of not needing to learn is losing one’s humanity. Over many generations, will such a population not produce evolutionary divergence?

Notes

1. See my dedicated discussion: The Minimal Structure of Thought.

2. For one of these, see my Lessons in Reading and Writing.

3. Legal philosopher Brian Leiter, in a blog post titled “AI will destroy universities”, quoted at length from an angry statement by a philosophy professor: “I…teach political philosophy in a British university, so I have had to wrestle with the impact of large language models (LLMs) in one small domain: higher education. And here, my conclusion is simple. The threat they pose is existential… Yet it’s not just the problem of brazen cheating. In some ways, the more insidious threat LLMs pose to undergraduate learning is the promise of instant shortcuts. Why struggle through that difficult article, why read that complicated book, why force yourself through the problem set, when the internet can just summarize it for you? The answer to which is: because it is only through the struggle, the forcing, the wrestling with ideas for yourself, over the course of years, that you can truly train and develop your mind. Indeed, this is the reason university humanities degrees put such a high premium on writing. Writing is thinking. Until you have tried to put your ideas on the page, you never really know if you understand them and have them under control.” Ultimately, Leiter made a reluctant decision: “For thirty years, I gave take-home essay exams in my jurisprudence classes. No longer: now it’s a 4-hour in-class essay exam, no Internet access.” Meanwhile, an astrophysics researcher, in a blog post titled “The machines are fine. I’m worried about us”, cried out: “Centuries of pedagogy, defeated by a chat window.” He made a similar point, noting that even in a discipline like astrophysics, education is not merely about transmitting knowledge but about enriching life experience. Before I read that article, I had thought of the trial-and-error learning process as crucial chiefly for the humanities. This piece by a natural scientist showed me that AI’s threat is shared by the humanities and the natural sciences alike.

For all sciences and arts, the ultimate motivation that drives people to research and practice is that it provides a path to understanding the world and oneself, and thereby to a full and rich experience of life.